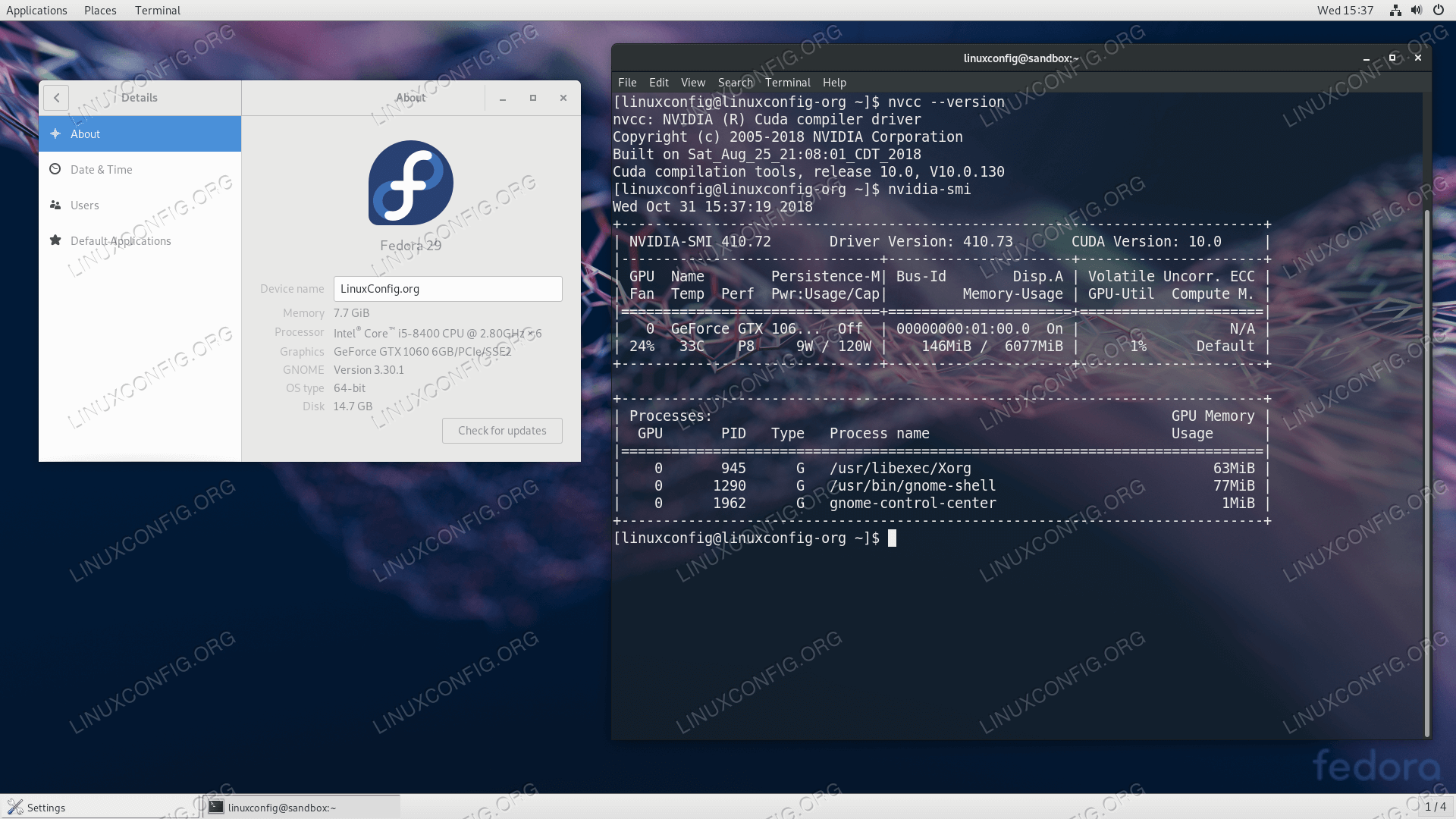

CUDA is a parallel computing platform and programming model developed by NVIDIA for general computing on graphics processing units (GPUs). The CUDA Toolkit from NVIDIA provides everything you need to develop GPU-accelerated applications.

Transformers4Rec is a flexible and efficient library for sequential and session-based recommendation and can work with PyTorch.

Linux x86_64 is the target for development on the x86_64 architecture. CUDA on Linux can be installed using an RPM, Debian, Runfile, or Conda package, depending on the platform being installed on. The Debian route starts by installing the repository metadata with `sudo dpkg -i cuda-repo-…`. If you are installing in a WSL environment, you must use the WSL-specific instructions; do not use the Ubuntu instructions in this case. Once installed, you'll have access to all of the features of the latest version of the NVIDIA CUDA Toolkit.

The NVIDIA CUDA Toolkit is available from the default Conda channels as well as the NVIDIA Conda channel. The standard recommended approach is to install it through Miniconda/Anaconda: run `conda install cudatoolkit` in your terminal or command prompt, or `conda install cuda -c nvidia` to pull it from the NVIDIA channel. This Conda package includes GPU-accelerated libraries and the CUDA runtime for the Conda ecosystem; the full CUDA Toolkit with a compiler and development tools is distributed separately. To uninstall the CUDA Toolkit using Conda, run `conda remove cuda`.

Conda itself opens another can of worms: a conda environment is separate from python3's standard pip3 setup, so every module you previously installed through pip3 has to be installed again using conda.
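The Conda install and uninstall commands mentioned above can be sketched as a small Python helper. This is only an illustration: the function names `conda_cuda_command` and `in_conda_env` are hypothetical, nothing is executed, and the `conda-meta` check is a common heuristic for spotting a conda environment rather than an official API.

```python
import os
import sys


def conda_cuda_command(action="install", package="cuda", channel="nvidia"):
    """Return the conda command as an argument list (nothing is executed).

    Hypothetical helper: it only assembles the commands quoted in the text.
    """
    if action == "install":
        cmd = ["conda", "install", package]
        if channel:  # channel=None means "use the default Conda channels"
            cmd += ["-c", channel]
        return cmd
    if action == "remove":
        return ["conda", "remove", package]
    raise ValueError(f"unsupported action: {action!r}")


def in_conda_env():
    """Heuristic (an assumption): conda environments keep a 'conda-meta' directory."""
    return os.path.isdir(os.path.join(sys.prefix, "conda-meta"))


if __name__ == "__main__":
    # Print the commands instead of running them, so this is safe to try anywhere.
    print(" ".join(conda_cuda_command()))
    print(" ".join(conda_cuda_command("install", "cudatoolkit", channel=None)))
    print(" ".join(conda_cuda_command("remove")))
    print("Running inside a conda environment:", in_conda_env())
```

If `in_conda_env()` reports `False`, packages installed with pip3 for the system interpreter are the ones in use; inside a conda environment they would need to be installed again, which is the separation described above.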
Merlin Systems provides tools for combining recommendation models with other elements of production recommender systems (like feature stores, nearest neighbor search, and exploration strategies) into end-to-end recommendation pipelines that can be served with Triton Inference Server. A GPU-accelerated streaming data library with Python bindings.
